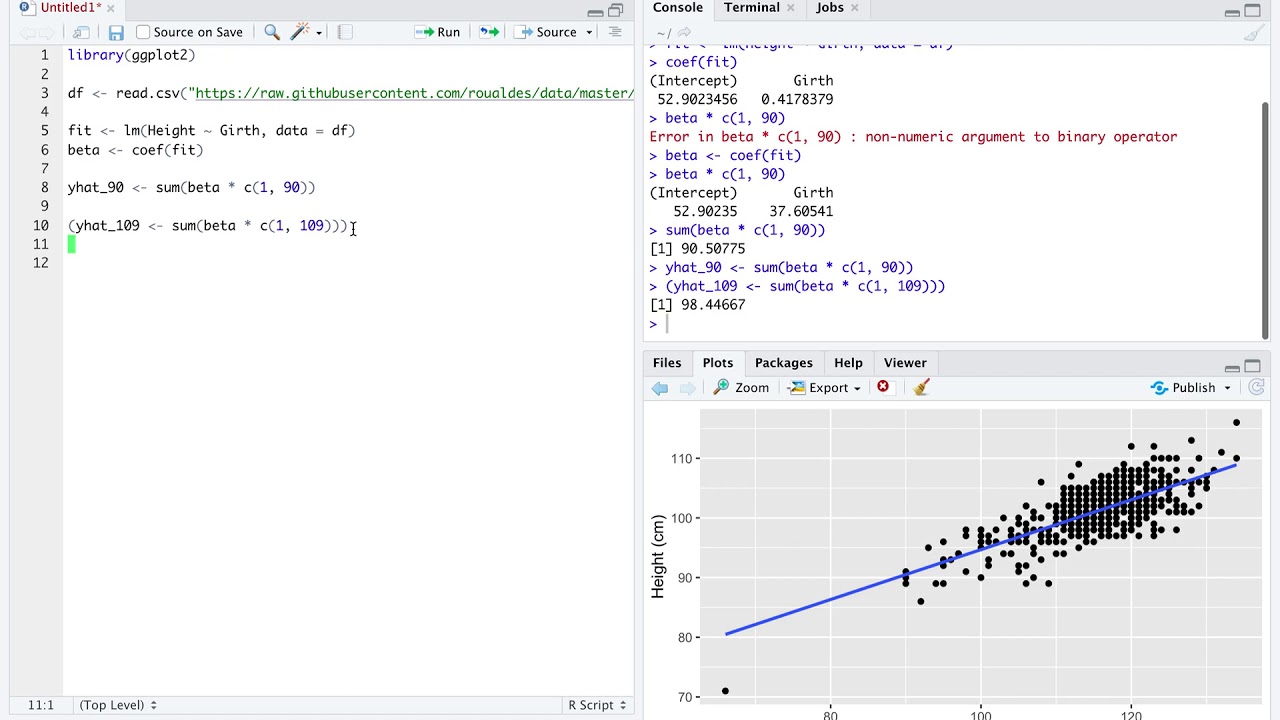

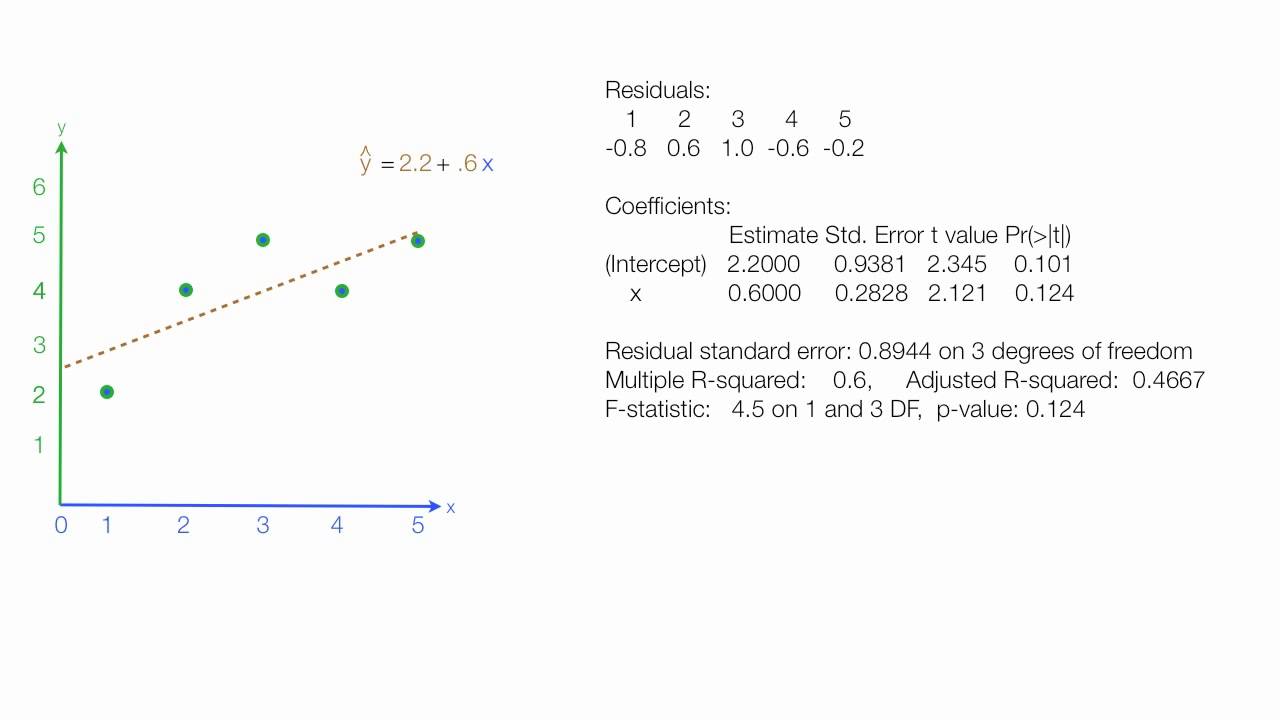

Based on the standard error of each estimate, we can construct confidence intervals of the form $\hat{\beta}_j \pm t_{1-\alpha/2,\,n-p}\,\widehat{\mathrm{SE}}(\hat{\beta}_j)$. That is, assuming all model assumptions are satisfied, we can say that with 95% confidence (which is not probability) the true parameter lies in this interval. Here we can see that the entire confidence interval for number of rooms corresponds to a large effect size relative to the other covariates.

Next comes the t statistic, $t_j = \frac{\hat{\beta}_j - \beta_{j,0}}{\widehat{\mathrm{SE}}(\hat{\beta}_j)}$, which tells us how far our estimated parameter $\hat{\beta}_j$ is from a hypothesized value $\beta_{j,0}$, scaled by the standard deviation of the estimate. Assuming that the noise term $\epsilon$ is Gaussian, under the null hypothesis that $\beta_j = 0$, this will be t distributed with $n - p$ degrees of freedom, where $n$ is the number of observations and $p$ is the number of parameters we need to estimate. Each coefficient also has a p-value: under the t distribution with $n - p$ degrees of freedom, it tells us the probability of observing a value at least as extreme as our t statistic. If this probability is sufficiently low, we can reject the null hypothesis that this coefficient is $0$.

However, note that when we care about looking at all of the coefficients, we are actually doing multiple hypothesis tests, and need to correct for that. In this case we are making five hypothesis tests, one for each feature and one for the intercept coefficient. Instead of using the standard p-value threshold of $0.05$, we can use the Bonferroni correction and divide by the number of hypothesis tests, and thus set our p-value threshold to $0.05 / 5 = 0.01$. The linear regression summary printout then gives the residual standard error, the $R^2$, and the $F$ statistic and test.
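As a rough illustration of the Bonferroni correction mentioned above, here is a minimal Python sketch. The function names (`bonferroni_threshold`, `bonferroni_reject`), the feature labels, and the p-values are all hypothetical and made up for illustration; they are not the values from the regression printout in this post. The only things taken from the text are the conventional 0.05 level and the count of five tests.

```python
def bonferroni_threshold(alpha, num_tests):
    """Per-test significance threshold under the Bonferroni correction."""
    if num_tests < 1:
        raise ValueError("num_tests must be at least 1")
    return alpha / num_tests


def bonferroni_reject(p_values, alpha=0.05):
    """Return (threshold, decisions).

    decisions[name] is True when the null hypothesis for that
    coefficient is rejected at the corrected threshold.
    """
    threshold = bonferroni_threshold(alpha, len(p_values))
    decisions = {name: p < threshold for name, p in p_values.items()}
    return threshold, decisions


# Hypothetical p-values for illustration only; NOT taken from the post's model.
example_p_values = {
    "intercept": 0.0004,
    "feature_a": 0.0300,
    "feature_b": 0.0020,
    "feature_c": 0.2500,
    "feature_d": 0.0090,
}

threshold, decisions = bonferroni_reject(example_p_values, alpha=0.05)
print(f"per-test threshold: {threshold:.3f}")
for name, rejected in decisions.items():
    print(f"{name}: {'reject' if rejected else 'fail to reject'} the null")
```

In this made-up example, `feature_a` has a p-value below 0.05 but above the corrected threshold, so it would count as significant without the correction and not with it.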
